AI cameras & the PRIS Act: Why the AI-powered traffic camera debate matters

22 Apr 2026

As the Privacy and Responsible Information Sharing Act 2024 (WA) (PRIS Act) approaches its commencement date of 1 July 2026, Western Australian government agencies must pivot from a purely operational focus to a robust ‘privacy-by-design’ framework.

Nowhere is this shift more visible – or seemingly more contested – than in the use of road safety cameras with AI detection software. While AI-powered cameras promise significant road-safety benefits, their reliance on automated data processing places them directly under the microscope of the new legislation.

For WA agencies, the (ever-decreasing) transition period between now and 1 July 2026 is critical for aligning their use of AI with the upcoming standards of accountability and transparency.

Internal Governance Challenge: Beyond the ‘Black Box’

AI camera systems in Western Australia have, to date, functioned primarily as enforcement aids.

They are enabled under existing road‑traffic enforcement legislation, with AI treated as a technical method of detecting offences. However, enforcement aids are not exempt from privacy and surveillance laws, and from 1 July 2026, they will also be subject to the PRIS Act.

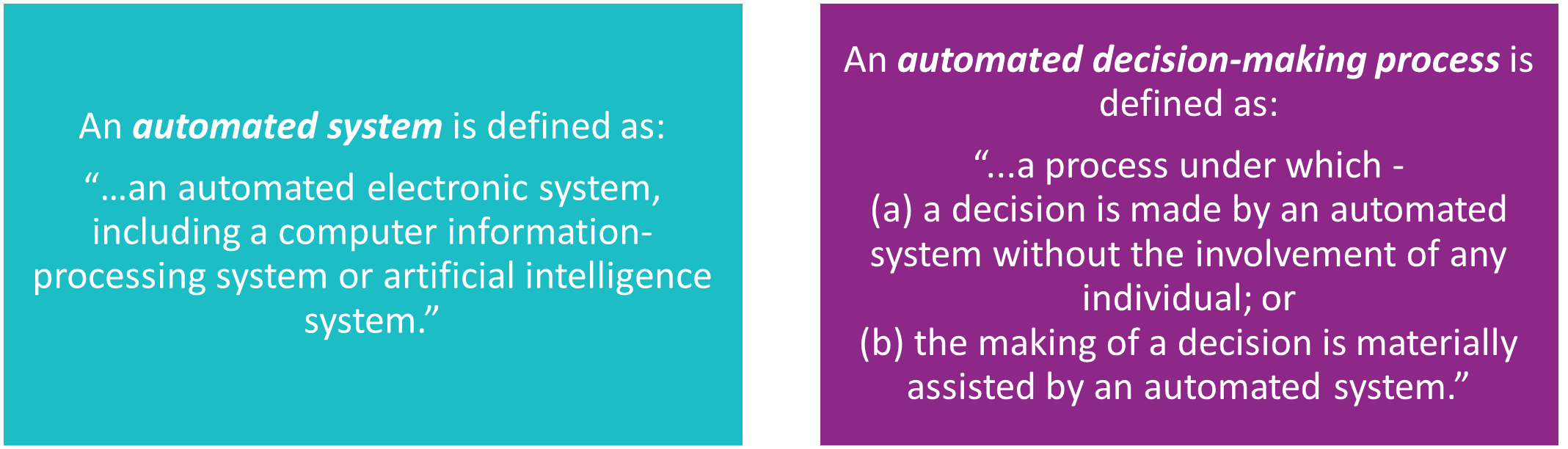

Of particular significance, the PRIS Act will introduce Information Privacy Principle 10 (IPP 10), which specifically governs Automated Decision-Making (ADM).

Compliance with IPP 10 will require, among other things, that agencies:

- conduct an assessment of the impact of the ADM process on the identified individuals, and specifically a ‘privacy impact assessment’ where the collection of biometric and interior vehicle data results in a “high privacy impact” activity; and

- ensure transparency around the use of ADM processes and provide mechanisms for individuals to request human involvement in decisions affecting them.

When Automation Goes Wrong: Why the Debate Matters

Recent headlines have highlighted a growing number of infringement notices being reviewed, withdrawn or overturned due to identification and contextual errors. The case of Ms Milly Bartlett is a useful example. Ms Bartlett received an infringement notice despite the photo clearly showing her wearing her seatbelt. The AI software failed to detect the belt because strong sun reflection made it appear white, so that it ‘blended in’ with her top in the black-and-white image.

While this matter was resolved under the existing framework, it raises a more difficult question: how would such a system be assessed under the PRIS Act?

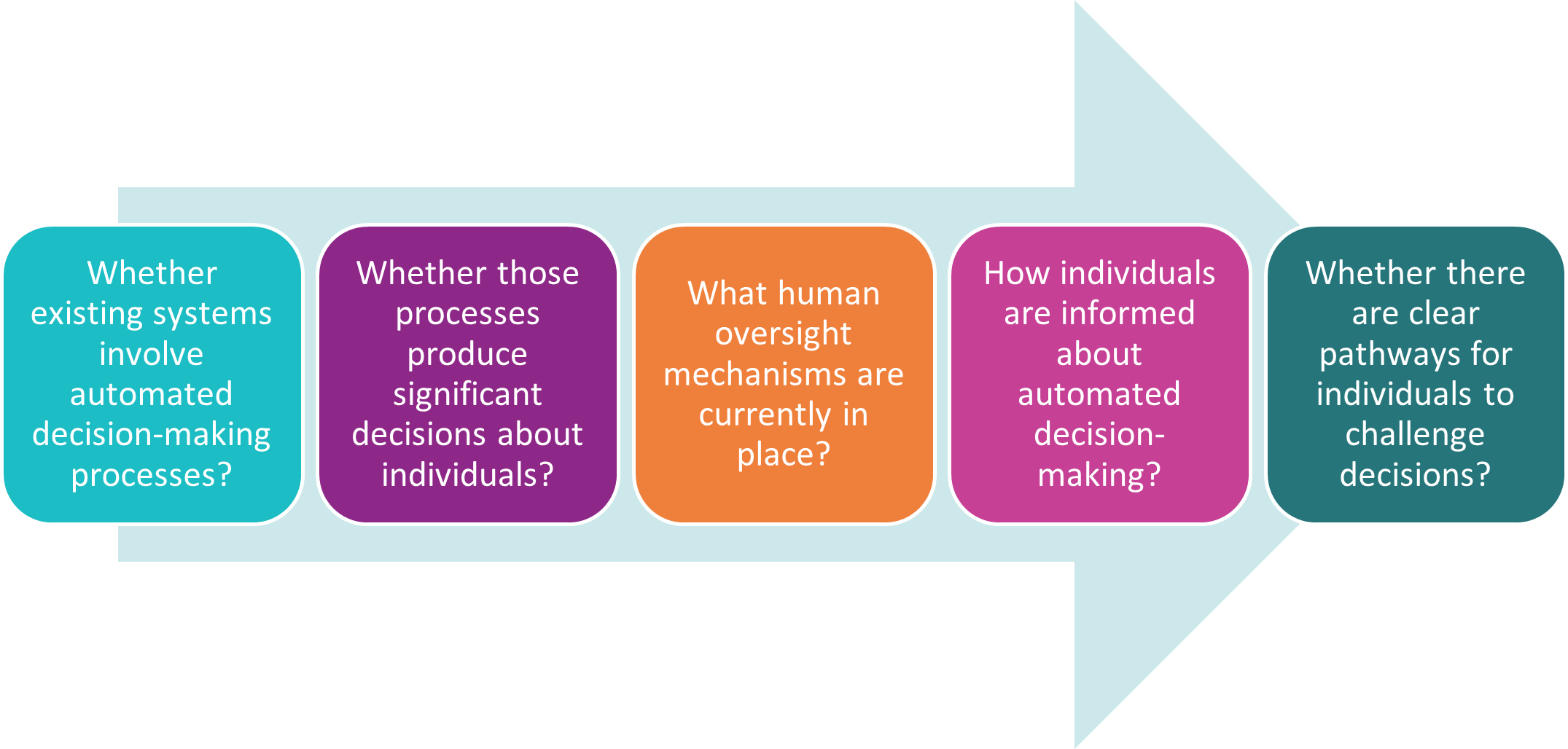

Had IPP 10 been in force, it would have required certain steps to be taken before the automated process was enabled, and it would have given Ms Bartlett enforceable rights once the process was used against her. Although detailed regulatory guidance is still developing, the direction is clear. Governance arrangements must address:

- transparency about the use of automated systems;

- the level of human oversight or review in the decision-making process; and

- mechanisms for individuals to challenge or seek review of automated decisions.

Preparing for 1 July 2026

The PRIS Act is more than a ‘check-box’ compliance exercise; it signals a modernisation of the social contract between government agencies and the individuals they serve. It is not simply about tightening data-handling rules, but about embedding accountability, requiring genuine transparency in the use of automated systems, and ensuring individuals have meaningful avenues for recourse when those systems affect them.

In particular, agencies should begin considering:

With stronger governance and a commitment to genuine openness, WA agencies can ensure that AI supports public safety rather than becoming a source of legal and ethical tension.

This article was written by Ariel Bastian, Senior Associate, and Anna Kosterich, Lawyer, Corporate Commercial.